Dubbing, i.e., the lip-synchronous translation and revoicing of audio-visual media from a source language into a different target language, is essential for the full reception of foreign audio-visual media.

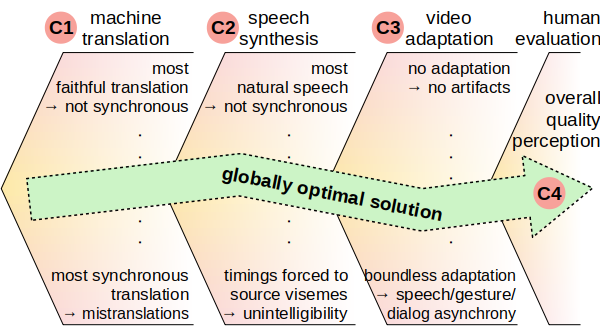

Since 2019, I have been working on a project to automate dubbing through the global optimization of machine translation, performative speech synthesis, and potential video adaptation, with the goal of yielding the best possible perceived quality for the viewer of the video.

Collaborators:

- Fondazione Bruno Kessler (Alina Karakanta, Matteo Negri and Marco Turchi)

- Ashutosh Saboo (research intern)

- Shravan Nayak (research intern)

- Supratik Bhattacharya (research intern)

- Debjoy Saha (research intern)

- Kommineni Aditya (research intern)

Related Publications:

- Alina Karakanta, Supratik Bhattacharya, Shravan Nayak, Timo Baumann, Matteo Negri and Marco Turchi (2020). "The Two Shades of Dubbing in Neural Machine Translation". In: Proceedings of COLING 2020.

- Shravan Nayak, Timo Baumann, Supratik Bhattacharya, Alina Karakanta, Matteo Negri and Marco Turchi (2020). "See me speaking? Differentiating on whether words are spoken on screen or off to optimize machine dubbing". In: Proceedings of the 1st International Workshop on Deep Video Understanding.

- Ashutosh Saboo and Timo Baumann (2019). "Integration of Dubbing Constraints into Machine Translation". In: Proceedings of the Fourth Conference on Machine Translation (Volume 1: Research Papers). Florence, Italy. Association for Computational Linguistics, pages 94-101.

- Timo Baumann and Ashutosh Saboo (2021). "Evaluating Heuristics for Audio-Visual Translation". In: Proceedings of the Conference on Computational Humanities Research 2021. Amsterdam, the Netherlands. (Maud Ehrmann, Folgert Karsdorp, Melvin Wevers, Tara Lee Andrews, Manuel Burghardt, Mike Kestemont, Enrique Manjavacas, Michael Piotrowski and Joris van Zundert, Eds.) Pages 171-180.

Page last modified on November 26, 2021, at 03:46 PM
